Benchmarking the AWS Graviton2 processors

There's been a lot of fanfare about the new AWS Graviton2 processors, and the performance and cost benefits of using them compared to their alternatives in the cloud.

These ARM-based processors power the new M6g, C6g, and R6g families of EC2 instance types; the previous (first) generation powers the A1 family. AWS promises that the Graviton2 offers significant improvements over the current 5th-generation x86 EC2 mainstays: 20% lower cost and 40% higher performance, based on AWS' own internal testing, according to the AWS Graviton2 landing page.

So, I wanted to have a bit of fun and do some light benchmarking myself.

Methodology

I wanted to gather as many data points as I could without spending too much effort on it, so I created a bash script that's meant to be run as an EC2 instance's user data. (I've always thought the naming was pretty off: EC2 user data is really just a script that executes on launch.)

You can take a look at the script here. It will essentially do the following:

- Gather some information from the instance metadata,

- Install sysbench and the AWS CLI,

- Set the instance to terminate when it's shut down (instead of just stopping),

- In sets of powers of 2, for the available cores in the machine:

  - Run benchmarking tests,

  - Format and append the results into a CSV file,

- Upload the results to an Amazon S3 bucket,

- Shut down the instance (effectively terminating it).
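The core of that loop can be sketched in a few lines. This is a Python illustration rather than the bash script itself, and it makes some assumptions: that the benchmark is sysbench's `cpu` test, that the "events per second" line of its report is the number worth keeping, and that a CSV row holds the instance type, thread count, and score. The function names and column order are hypothetical.

```python
import csv
import io
import re


def thread_counts(cores):
    """Powers of two up to the core count, e.g. 8 -> [1, 2, 4, 8]."""
    counts = []
    n = 1
    while n <= cores:
        counts.append(n)
        n *= 2
    return counts


def sysbench_command(threads):
    """Command line for a sysbench CPU run (assumed test; the real script may differ)."""
    return ["sysbench", "cpu", f"--threads={threads}", "run"]


def parse_events_per_second(output):
    """Pull the 'events per second' figure out of sysbench's text report."""
    match = re.search(r"events per second:\s*([\d.]+)", output)
    if match is None:
        raise ValueError("no 'events per second' line found")
    return float(match.group(1))


def csv_row(instance_type, threads, events_per_second):
    """Format one result as a CSV line (hypothetical column order)."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow([instance_type, threads, events_per_second])
    return buffer.getvalue()
```

On an actual instance, each command from `sysbench_command` would be run with something like `subprocess.run(..., capture_output=True, text=True)`, the captured output passed to `parse_events_per_second`, and the resulting row appended to the CSV file before the upload step.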

To automate the entire process, I created an EC2 Launch Template that uses this user data script, and created an EC2 Auto Scaling group from it. The EC2 instances run the benchmark tests and terminate themselves, and the Auto Scaling group happily creates new instances to replace them. I left those running for a day or so, just collecting results on S3.

Once the data points are ready, accessing them is just a matter of using AWS Glue and Amazon Athena to run SQL queries directly on the CSV files and crunch the numbers.
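The number crunching itself is nothing fancy: group the rows and average the scores. As a rough local stand-in for that kind of query (plain Python instead of Athena SQL, with hypothetical column names `instance_type`, `threads`, and `events_per_second`, and placeholder rows rather than real results), a sketch could look like this:

```python
import csv
import io
from collections import defaultdict
from statistics import mean


def average_by_group(csv_text):
    """Average events_per_second per (instance_type, threads) pair."""
    groups = defaultdict(list)
    for row in csv.DictReader(io.StringIO(csv_text)):
        key = (row["instance_type"], int(row["threads"]))
        groups[key].append(float(row["events_per_second"]))
    return {key: mean(values) for key, values in groups.items()}


# Placeholder rows for illustration only; these are not measured results.
SAMPLE = """instance_type,threads,events_per_second
example.a,1,100.0
example.a,1,110.0
example.b,1,80.0
"""

for (instance_type, threads), avg in sorted(average_by_group(SAMPLE).items()):
    print(f"{instance_type} threads={threads} avg={avg:.1f}")
```

In Athena the same idea would be a `GROUP BY` over the table that Glue infers from the CSV files.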

Results

After a weekend of running the benchmarks, I managed to get the sample pool below.

I wanted to get benchmarking results for when all the cores in the instance are used together, as well as when the test is constrained to a single core. All the instance types I used have 2 cores, with varying amounts of memory. If you use the user data script above for your own testing, however, it should also test other core counts (e.g. 1, 2, 4, 8).

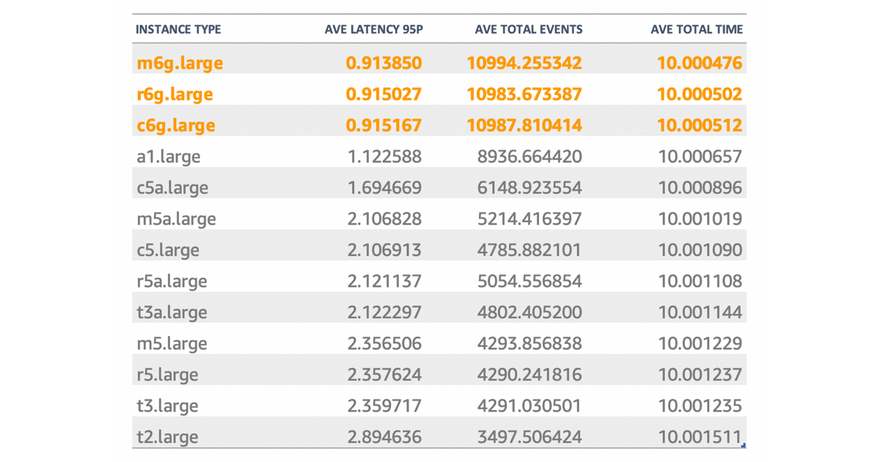

These are the summarized results from the single-thread benchmark tests:

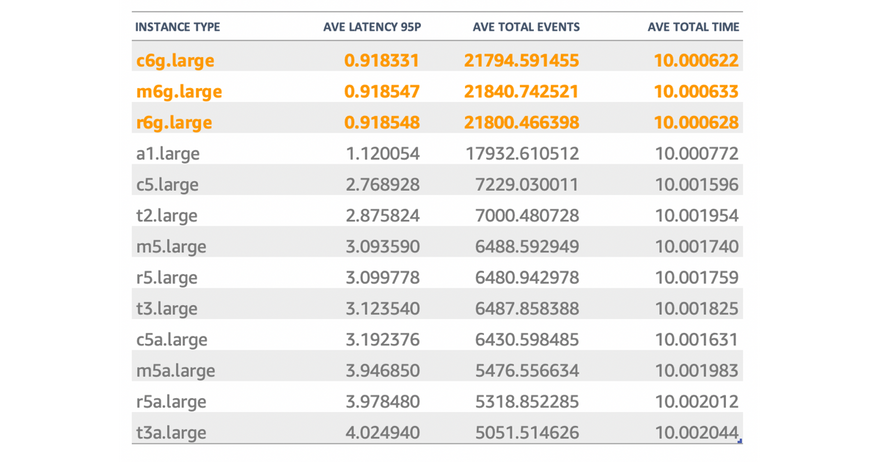

And this is the summary from the multi-thread benchmark tests (all of which effectively used 2 threads):

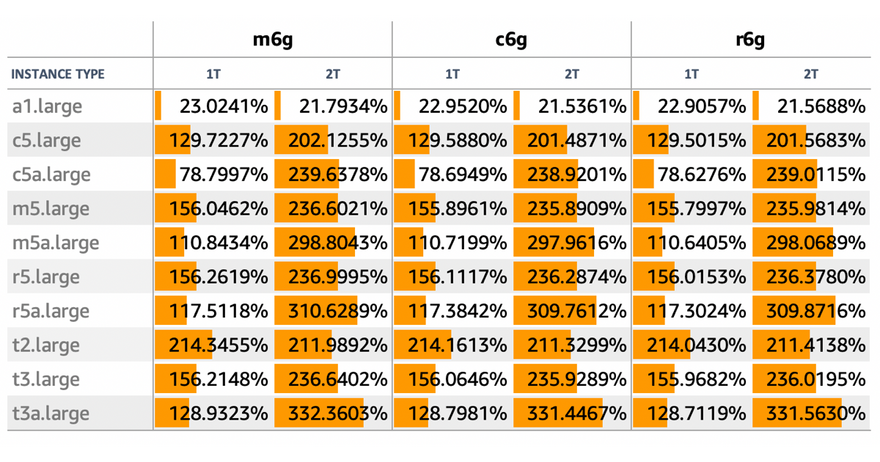

I had a few minutes to spare, so I went ahead and looked at how much improvement each Graviton2 instance gave over each of the non-Graviton2 options:
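The calculation behind that kind of comparison is a plain relative difference. A minimal sketch in Python, assuming a higher-is-better score such as events per second; the function name is hypothetical and the scores are made-up placeholders, not numbers from my runs:

```python
def percent_improvement(candidate, baseline):
    """Relative gain of candidate over baseline, in percent (higher score = better)."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (candidate - baseline) / baseline * 100.0


# Made-up placeholder scores, NOT benchmark results from this post.
placeholder_scores = {"graviton2-example": 125.0, "x86-example": 100.0}

gain = percent_improvement(
    placeholder_scores["graviton2-example"],
    placeholder_scores["x86-example"],
)
print(f"{gain:.1f}% improvement")
```

For lower-is-better metrics such as latency, the sign flips, so the two arguments would need to be swapped or the result negated.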

For really quick, shallow numbers, I think these are quite telling. Granted, the real litmus test of any new tech is its application to real scenarios (and not just context-free tests like these), but I'd say the numbers as they stand indicate that the Graviton2 processors offer pronounced improvements over the other options.

It'd be nice to find some time to be able to run an applied benchmarking test, like how KeyDB did theirs.